End-to-End vs Integration Testing: Key Differences


End-to-end and integration testing are both critical to comprehensive test automation, yet they differ in focus and scope. In this article, we’ll guide you through the fundamentals and key differences of these two types of testing.

End-to-end testing in software development

End-to-end (E2E) testing is a software testing technique that verifies the software functionality in its entirety from start to finish. It involves testing a complete workflow, starting from the user's entry point to the application's final output, and ensuring that the system functions correctly as a unified entity.

In end-to-end testing, testers replicate live data to simulate real-world user scenarios, covering all the steps that a user would take to perform a particular task. For example, in a shopping application, end-to-end testing would involve testing the login functionality, user registration, shopping cart, checkout, and payment processing.

Such a user flow can appear linear and straightforward on the interface, but beneath the surface are hundreds of components communicating with one another, from APIs and databases to networks and third-party integrations. End-to-end testing aims to verify the application’s flow of information, including all related paths and dependencies.

End-to-end testing objectives

The main purpose of E2E testing is to validate that all the system components can run under real-world scenarios. This can be broken down into:

- Checking the system flow: By mimicking a step-by-step experience, E2E tests can verify every touchpoint with the application from the end user’s perspective. Through this method, testers can gain insights into how the software behaves throughout a user journey.

- Verifying subsystems and layers: Applications are built on complex infrastructure consisting of many moving components. E2E testing ensures that all these layers work together, from the front-end UI to the underlying API, and between internal and external systems.

End-to-end testing example

In this scenario, a customer searches for available rooms on the hotel booking website. He then selects a room, books it, and verifies that the booking is successful. Below are the possible test cases:

- Verify if the search bar brings up available rooms.

- Ensure the search results display the correct information (e.g., room type, availability, price).

- Verify if the customer can select a room and add to cart.

- Ensure the customer can enter their personal and payment information correctly.

- Verify the payment feature.

- Check if the customer receives a confirmation email.

- Verify that the email contains the correct booking details (e.g., room type, dates, price, reference number).

- Check if the booking is listed in the booking history.

In this example, end to end testing covers the entire booking process on the hotel website, from searching for available rooms to receiving a confirmation email. It also verifies that the website elements (search bar, payment gateway, email notification) meet all functional requirements and work seamlessly with each other.
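The test cases above can be sketched as a single journey. The snippet below is only an illustration: `HotelSite`, `Room`, and the 16-digit card check are hypothetical in-memory stand-ins, not a real booking site or payment gateway. A real E2E test would drive the actual UI and services instead.

```python
from dataclasses import dataclass, field


@dataclass
class Room:
    room_type: str
    price: int              # price per night, in whole currency units
    available: bool = True


@dataclass
class HotelSite:
    """Toy in-memory stand-in for the booking site (hypothetical)."""
    rooms: list
    outbox: list = field(default_factory=list)    # "sent" confirmation emails
    history: list = field(default_factory=list)   # completed bookings

    def search(self):
        # Only rooms that can still be booked are shown to the customer.
        return [room for room in self.rooms if room.available]

    def book(self, room, email, card_number):
        if not room.available:
            raise ValueError("room is no longer available")
        # Assumed validation rule for the sketch: a card is 16 digits.
        if len(card_number) != 16 or not card_number.isdigit():
            raise ValueError("payment declined")
        room.available = False
        booking = {
            "reference": f"REF-{len(self.history) + 1:04d}",
            "room_type": room.room_type,
            "price": room.price,
            "email": email,
        }
        self.history.append(booking)
        self.outbox.append(dict(booking))  # the email carries the same details
        return booking


def test_booking_journey():
    site = HotelSite(rooms=[Room("double", 120), Room("suite", 300, available=False)])

    # Search returns only available rooms, with the correct details.
    results = site.search()
    assert [room.room_type for room in results] == ["double"]
    assert results[0].price == 120

    # The customer selects a room, enters details, and pays.
    booking = site.book(results[0], "guest@example.com", "4111111111111111")

    # A confirmation email is sent and contains the booking details.
    assert site.outbox[-1] == booking

    # The booking appears in the history, and the room is no longer offered.
    assert booking in site.history
    assert site.search() == []
    return booking


test_booking_journey()
```

Note that one test walks through every step in order, so a failure anywhere in the chain (search, payment, email, history) fails the whole journey.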

Approaches to end-to-end testing

There are 2 approaches to performing end to end testing.

Horizontal

Horizontal E2E testing is done from the viewpoint of users, where the focus is on simulating specific use cases and navigating through them from beginning to end. This involves every customer-facing aspect of the application such as the user interface or application logic.

For example, in a shopping site, horizontal E2E testing may involve testing the checkout process, from selecting a product to completing the purchase. It would be used to verify that the product is added to the cart, customer information is collected, the payment is processed correctly, and the order is confirmed.

Vertical

Vertical E2E testing is a more technical approach that tests the application layer by layer in hierarchical order, from top to bottom. It is often used to evaluate complex parts of a system that have no user interface, such as databases or API calls.
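As a rough illustration of the layer-by-layer idea, the sketch below checks an API layer, then a business layer, then a data layer, with no UI involved. All three layers (`handle_request`, `BookingService`, `BookingRepository`) are hypothetical names invented for this example.

```python
class BookingRepository:
    """Data layer: keeps bookings in memory (stand-in for a database)."""

    def __init__(self):
        self._rows = {}

    def save(self, booking_id, data):
        self._rows[booking_id] = data

    def load(self, booking_id):
        return self._rows[booking_id]


class BookingService:
    """Business layer: applies rules, then persists through the repository."""

    def __init__(self, repo):
        self.repo = repo

    def create(self, booking_id, nights, nightly_rate):
        if nights <= 0:
            raise ValueError("nights must be positive")
        total = nights * nightly_rate
        self.repo.save(booking_id, {"nights": nights, "total": total})
        return total


def handle_request(service, payload):
    """API layer: turns a request payload into a service call and a response."""
    try:
        total = service.create(payload["id"], payload["nights"], payload["rate"])
    except (KeyError, ValueError):
        return {"status": 400}
    return {"status": 201, "total": total}


def run_vertical_checks():
    repo = BookingRepository()
    service = BookingService(repo)

    # Top layer: the API returns the right status and body.
    assert handle_request(service, {"id": "b1", "nights": 2, "rate": 100}) == {
        "status": 201,
        "total": 200,
    }
    assert handle_request(service, {"id": "b2", "nights": 0, "rate": 100}) == {"status": 400}

    # Middle layer: the business rule computes the total directly.
    assert service.create("b3", 3, 50) == 150

    # Bottom layer: the data written by the layers above is stored as expected.
    assert repo.load("b1") == {"nights": 2, "total": 200}
    assert repo.load("b3") == {"nights": 3, "total": 150}
    return "ok"


run_vertical_checks()
```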

Pros and cons of end-to-end testing

Pros

- Expanded test coverage: End-to-end testing stretches beyond unit testing and integration testing to verify all software functions, from the API to the UI level. It also checks that dependencies such as third-party integrations, services, and databases coexist without conflicts.

- Software quality across environments: E2E testing verifies the front end, ensuring compatibility across different browsers, devices, and platforms. Cross-browser testing is frequently performed for this purpose.

- Reduced redundant tests: As E2E testing is performed on composite scenarios, use cases, and workflows, it eliminates the need to repeatedly test individual components. If a defect is found to affect the interaction between two modules, there is no need to retest each module once the issue is fixed.

Read more: Top 10 Best End-to-End Testing Tools and Frameworks

Cons

- Resource-consuming: Mapping complete user journeys following the BDD approach can take an extensive amount of time, especially for apps with complex architecture. However, this can be aided with test automation tools that assist QA teams in test creation, execution, and maintenance.

- Complex environment setup: Setting up and maintaining a production-like environment can be challenging with lots of micro-services, local and virtual machines. Adopting an on-cloud testing system that supports a broad range of environments can be a more cost-effective alternative.

- Heavy maintenance: Modifications to one component may require corresponding updates to multiple end-to-end test cases. Managing test scripts, test data, and test environments to reflect changes can become complex, especially when dealing with frequent updates or agile development practices.

Integration testing in software development

Integration testing comes after unit testing and before end-to-end testing. In this phase, multiple modules are tested together as a single unit. Software typically comprises various modules built by individual developers. Each code unit may have been developed with specific requirements, functionalities, and constraints in mind, and their combination may result in unexpected behaviors or errors.

Integration testing involves testing the interfaces between the modules, verifying that data is passed correctly between the modules, and ensuring that the modules function correctly when integrated with each other.

Read more: Unit Testing vs Integration Testing: What are the key differences?

Integration testing objectives and examples

The objective of integration testing is to put all the modules together in an incremental manner and ensure that they work as expected without disturbing the functionalities of other modules.

In the case of a ride-hailing application, below are some possible integration test cases:

- Testing the integration between the UI and the GPS system: The app should be able to display the estimated arrival time of the driver based on the user's location.

- Testing the interaction between the app and the driver management system: When a user requests a ride, the driver management system is able to assign a nearby driver and send them the user's location. The app should also be able to display the driver's information, such as their name, photo, and car model.

- Verify the payment system: This involves testing that the app is able to process the payment for the ride and deduct the correct amount from the user's account. The app should also be able to provide the user with a receipt and trip details.
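Two of these cases can be sketched in code. The example below wires a ride app to a driver management module and a payment module, then checks the data passed across each interface. The classes, the straight-line distance rule, and the fare amounts are assumptions made for illustration only.

```python
class DriverManagement:
    """Hypothetical driver management module."""

    def __init__(self, drivers):
        # Each driver: {"name": ..., "car_model": ..., "position": (x, y)}
        self.drivers = drivers

    def assign_nearest(self, rider_position):
        def distance(driver):
            dx = driver["position"][0] - rider_position[0]
            dy = driver["position"][1] - rider_position[1]
            return (dx * dx + dy * dy) ** 0.5

        return min(self.drivers, key=distance)


class PaymentSystem:
    """Hypothetical payment module holding user balances."""

    def __init__(self, balances):
        self.balances = balances

    def charge(self, user, amount):
        if self.balances[user] < amount:
            raise ValueError("insufficient funds")
        self.balances[user] -= amount
        return {"user": user, "amount": amount}


class RideApp:
    """The app module that integrates with the two modules above."""

    def __init__(self, driver_management, payments):
        self.driver_management = driver_management
        self.payments = payments

    def request_ride(self, user, position):
        driver = self.driver_management.assign_nearest(position)
        return {"user": user, "driver_name": driver["name"], "car_model": driver["car_model"]}

    def complete_ride(self, ride, fare):
        receipt = self.payments.charge(ride["user"], fare)
        return {**receipt, "driver_name": ride["driver_name"]}


def test_ride_integration():
    drivers = [
        {"name": "An", "car_model": "Sedan", "position": (0, 0)},
        {"name": "Binh", "car_model": "Hatchback", "position": (5, 5)},
    ]
    payments = PaymentSystem({"rider1": 50})
    app = RideApp(DriverManagement(drivers), payments)

    # App <-> driver management: the nearest driver's details reach the app.
    ride = app.request_ride("rider1", (4, 4))
    assert ride["driver_name"] == "Binh"
    assert ride["car_model"] == "Hatchback"

    # App <-> payment system: the correct amount is deducted and a receipt returned.
    receipt = app.complete_ride(ride, 12)
    assert payments.balances["rider1"] == 38
    assert receipt == {"user": "rider1", "amount": 12, "driver_name": "Binh"}
    return receipt


test_ride_integration()
```

Unlike an E2E test, nothing here exercises the UI or a full user journey; the focus is only on what crosses the boundary between modules.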

Read More: Top Integration Testing Tools You Should Know

Approaches to integration testing

Integration testing can be done using many different approaches, with the 2 most common being the big bang and the incremental approach. In the first approach, all the components are integrated all at once, and then the entire system is tested as a whole. The Incremental approach, on the other hand, breaks down the software into manageable and logically related modules and tests each bundle before integrating them into the complete system.

The incremental approach is further divided into 2 methodologies:

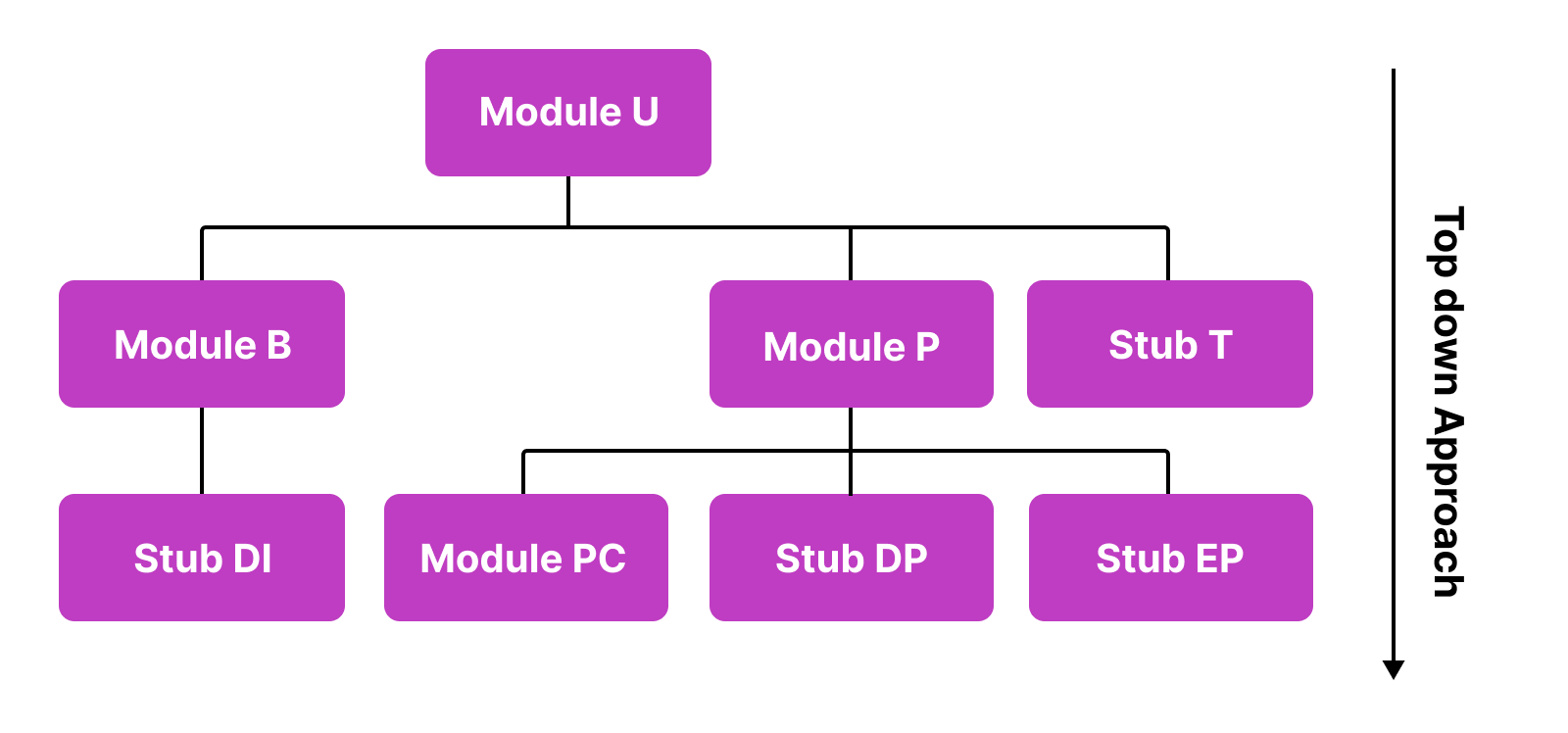

Top-down

A top-down approach involves testing the higher-level components of a software system before testing the lower-level components. Developers often use stubs to simulate the interfaces of lower-level modules that are unavailable or not yet fully developed.

For example, the ride-hailing application above can include these modules:

- Module A: User Authentication

- Module B: Ride Booking

- Module Driver Information

- Module P: Payment Processing

  - Module PC: Payment by Cash

  - Module DP: Debit Card/Credit Card Payment (yet to be developed)

  - Module EP: E-Payment (yet to be developed)

- Module T: Ride Tracking

Following the top-down approach, the test cases can be sequenced as follows:

- Test Case 1: Integrate and test Module A and Module B

- Test Case 2: Integrate and test Modules A, B, and T

- Test Case 3: Integrate and test Module A, B, T, and P
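To make the role of stubs concrete, here is a minimal sketch of Module P being tested while the card and e-payment modules are still missing. The class names and the canned responses are hypothetical; a real stub would mirror whatever interface the finished module is expected to expose.

```python
class CashPayment:
    """Module PC: assumed to be already implemented."""

    def pay(self, amount):
        return {"method": "cash", "amount": amount, "status": "collected"}


class CardPaymentStub:
    """Stub for Module DP (not yet developed): returns a canned response."""

    def pay(self, amount):
        return {"method": "card", "amount": amount, "status": "approved"}


class EPaymentStub:
    """Stub for Module EP (not yet developed): returns a canned response."""

    def pay(self, amount):
        return {"method": "e-payment", "amount": amount, "status": "approved"}


class PaymentProcessing:
    """Module P: the higher-level module under test."""

    def __init__(self, methods):
        self.methods = methods  # maps a method name to a lower-level module

    def process(self, method, amount):
        if method not in self.methods:
            raise ValueError(f"unsupported payment method: {method}")
        if amount <= 0:
            raise ValueError("amount must be positive")
        return self.methods[method].pay(amount)


# Module P is wired to one real module and two stubs.
payment_processing = PaymentProcessing(
    {"cash": CashPayment(), "card": CardPaymentStub(), "e-payment": EPaymentStub()}
)

# P's own routing and validation can be tested before DP and EP exist.
assert payment_processing.process("cash", 20)["status"] == "collected"
assert payment_processing.process("card", 20)["status"] == "approved"
assert payment_processing.process("e-payment", 20)["method"] == "e-payment"
```

Once DP and EP are developed, the stubs are swapped for the real modules and the same tests are run again.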

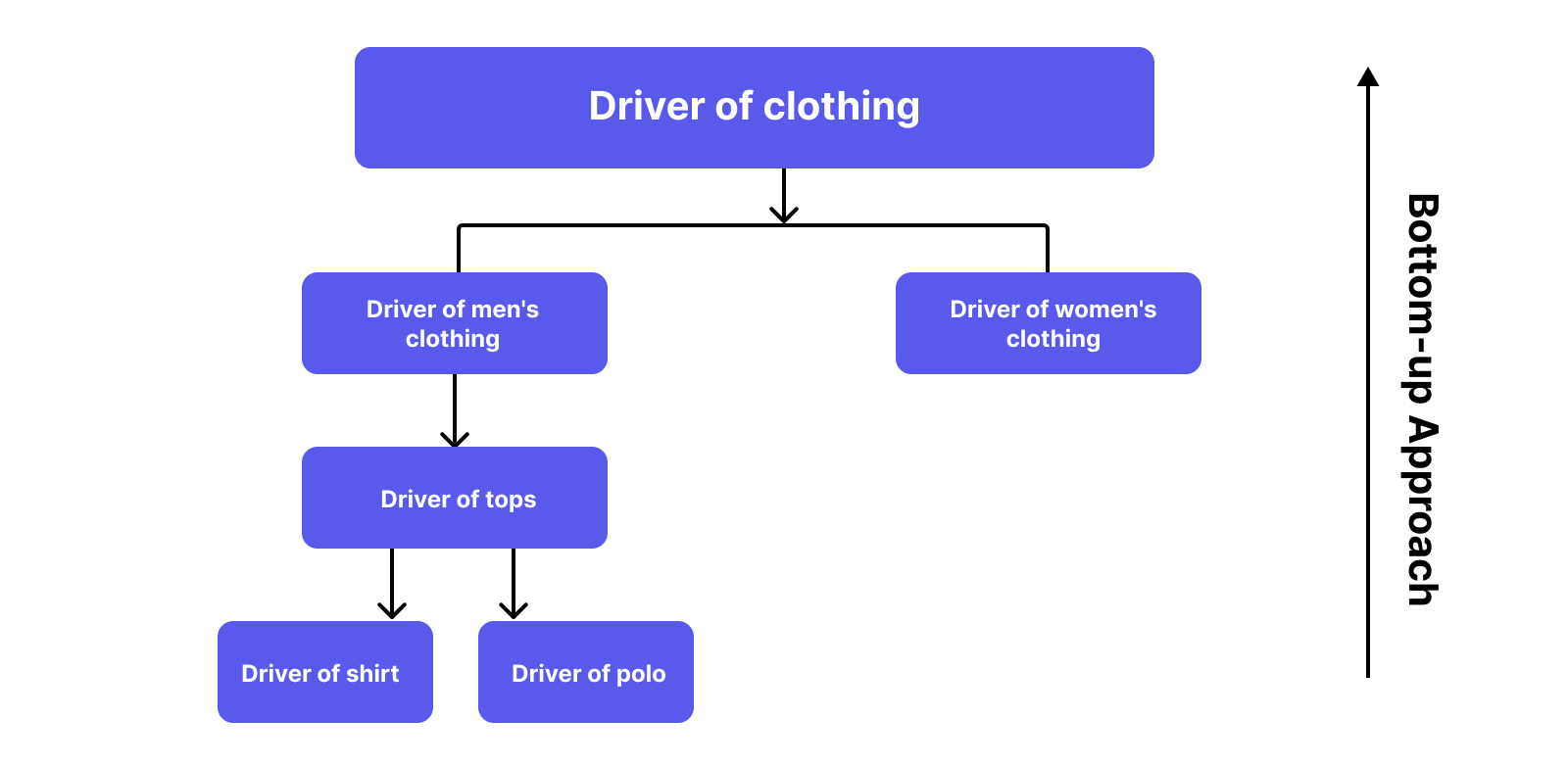

Bottom-up

In this method, testing lower-level modules is prioritized, followed by the higher-level modules. This ensures the reliability of the foundational modules before moving to higher-level ones.

In case the top modules are not in a ready state for testing, testers can use “drivers” as a replacement so that the testing can be continuous even when the application is not complete.

Here is an example to help you understand better:

Suppose the modules Clothing, Clothes for men, Clothes for women, Tops, Polo, and Shirt are still under development. Higher-level modules that are not ready can be substituted with drivers while the lower-level ones are tested:

- Test Case 1: Conduct Unit testing for the Modules Polo and Shirt.

- Test Case 2: Integrate and test Modules Tops-Polo-Shirt.

- Test Case 3: Integrate and test Modules Clothes for men-Tops-Polo-Shirt.

- Test Case 4: Conduct Unit testing for Module Clothes for Women.

- Test Case 5: Integrate and test Modules Clothing-Clothes for women-Clothes for men-Tops-Polo-Shirt.
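A driver can be as small as a function that calls the lower-level modules the way the missing parent would. In the sketch below, `polo_items` and `shirt_items` stand for the finished Polo and Shirt modules, and `tops_driver` is a hypothetical driver replacing the unfinished Tops module; the product names are made up.

```python
def polo_items():
    """Lower-level module Polo (assumed finished)."""
    return ["classic polo", "slim polo"]


def shirt_items():
    """Lower-level module Shirt (assumed finished)."""
    return ["oxford shirt"]


def tops_driver(*lower_modules):
    """Driver standing in for the unfinished Tops module.

    It calls each lower-level module, checks the shape of what comes back,
    and combines the results as the real Tops module is expected to.
    """
    collected = []
    for module in lower_modules:
        items = module()
        # Every item must be a non-empty string for the parent to use it.
        assert all(isinstance(item, str) and item for item in items)
        collected.extend(items)
    return collected


tops = tops_driver(polo_items, shirt_items)
assert tops == ["classic polo", "slim polo", "oxford shirt"]
```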

Pros and cons of integration testing

Pros

- Integration testing provides a systematic method for connecting a software system while performing tests to detect issues related to interfacing such as inconsistent code logic or erroneous data.

- Integration tests help identify performance bottlenecks that arise with increased data volumes, higher processing loads, or resource limitations when components are combined.

- With the use of stubs and drivers to substitute for underdeveloped modules, it doesn't require waiting until all system modules are coded and unit tested before initiating integration testing.

Cons

- The variety of components involved (e.g., platforms, environments, databases) adds to the complexity of integration testing.

- In the case of the big bang approach, where all modules are tested all at once, critical modules which may be prone to defects are not isolated and tested on priority.

End-to-End Testing vs. Integration Testing

| | End-to-End Testing | Integration Testing |
|---|---|---|
| Purpose | Validates system behavior in real-world scenarios | Validates integration between components |
| Scope | Broader in scope; covers the entire technology stack of the application | Interaction between different components/modules |
| Cost | More expensive, as it often requires more resources, including personnel, equipment, and testing environments | Less expensive than end-to-end testing |
| Testing stage | Performed at the end of the software development lifecycle, before releases | After unit testing and before end-to-end testing |
| Technique | Black-box testing, often uses User Acceptance Testing (UAT) | White-box testing, often uses API testing |

Read More: What is Software Testing? Full Guide

End-to-end and integration testing: Are They Complementary or Interchangeable?

With their differences in scope, focus, and approach, these two types of testing cannot be used interchangeably. End-to-end testing and integration testing are complementary in the sense that they provide different levels of testing.

By performing Integration Testing first, any issues related to the interaction between different components can be identified, ensuring that the system functions as a whole, without any integration bottlenecks. End-to-End Testing then helps check if the software meets the requirements and specifications in real-world scenarios.

Both types of testing have a high level of complexity with the involvement of various modules, APIs, and systems, which makes them fine candidates for test automation.

While manual tests still remain necessary, they are not fully exhaustive. When it comes to large or complex systems, it may not be possible to execute every test case and cover all potential scenarios. Another challenge is providing a stable environment for end-to-end/integration testing. Humans are prone to making mistakes and it takes only one wrong click to derail a testing scenario.

Automated testing platforms can be leveraged to compensate for these limitations, ensuring more accurate, efficient, and stable E2E and integration tests.

Katalon for end-to-end and integration testing

Katalon is a comprehensive software quality management platform that supports a wide range of testing types. Katalon enables UI and functional automated testing for web, API, desktop, and mobile applications, all in one platform.

Below are some of the features that help simplify automated integration and end-to-end testing.

Easy test creation with record-and-playback

Writing code that simulates user journeys typically requires programming knowledge, such as VBA (Visual Basic for Applications), which can be daunting for people who are unfamiliar with software development.

Katalon's record-and-playback enables translating complex testing scenarios into simple, repeatable steps. Both technical and non-technical testers can design tests by interacting with the UI just like a regular user. The Recorder watches and records all your actions so that you can focus on creating the user journey itself.

Web API testing

Katalon provides robust support for API testing, including the ability to test GraphQL, REST, and SOAP APIs. Its integrations with API tools like Postman, Swagger, and SoapUI allow you to import existing API specifications and test your new API schema along with legacy API assets on one platform.

Read more: What is GraphQL Testing? How To Test GraphQL APIs?

On-cloud test execution for wide coverage

Compatibility testing across various devices, browsers, and OSs can be challenging, especially when it comes to setting up physical environments to execute tests. This process can be laborious and delay the entire testing project.

With Katalon, you can easily run tests on local and cloud browsers, devices, and operating systems in parallel, ensuring comprehensive test coverage.

Supported Page-object model to lower maintenance effort

Test maintenance is one of E2E testing's most daunting tasks. Larger regression test suites, extended test flows, and constant UI changes can make maintenance a time-consuming responsibility.

Following the page object design model, Katalon provides a centralized repository for not only designing but also managing your test cases and their artifacts. All UI elements, objects, and locators are stored in an object repository and shared across tests. When your UI changes, a few clicks are all it takes to get your automation scripts up and running again.
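The general page object pattern, independent of any tool, can be sketched as follows. This is not Katalon's object repository; `FakeBrowser` is a hypothetical stand-in for a browser driver, and the locators are invented. The point is that locators live in one class, so a UI change means one edit rather than one per test.

```python
class FakeBrowser:
    """Hypothetical stand-in for a browser driver, used only for this sketch."""

    def __init__(self, known_locators):
        self.known = set(known_locators)
        self.typed = {}
        self.clicked = []

    def _require(self, locator):
        if locator not in self.known:
            raise LookupError(f"no element matches {locator}")

    def type(self, locator, text):
        self._require(locator)
        self.typed[locator] = text

    def click(self, locator):
        self._require(locator)
        self.clicked.append(locator)


class LoginPage:
    """Page object: the only place that knows the login page's locators."""

    USERNAME = "#username"
    PASSWORD = "#password"
    SUBMIT = "#login-button"

    def __init__(self, browser):
        self.browser = browser

    def log_in(self, username, password):
        self.browser.type(self.USERNAME, username)
        self.browser.type(self.PASSWORD, password)
        self.browser.click(self.SUBMIT)


# Tests talk to the page object, never to raw locators.
browser = FakeBrowser({"#username", "#password", "#login-button"})
LoginPage(browser).log_in("alice", "s3cret")

assert browser.typed == {"#username": "alice", "#password": "s3cret"}
assert browser.clicked == ["#login-button"]
```

If the submit button's locator changes, only `LoginPage.SUBMIT` needs updating; every test that calls `log_in` keeps working unchanged.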

Solving locator flakiness with Self-healing

Test cases replicating real user scenarios can become unstable due to the complex nature of usage scenarios. Failed tests prevent testers from gaining valuable insights as the root causes may not reflect the AUT’s real performance.

Katalon supports the self-healing mechanism to tackle the issue of broken locators during execution. When the default locator fails, new and more maintainable ones are automatically produced for the same object to avoid flakiness when the web UI changes.
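The underlying idea can be illustrated with a simplified fallback lookup. This sketch is not Katalon's algorithm: it assumes a predeclared list of alternative locators rather than generating new ones, and the dictionary `page` is a toy stand-in for a rendered page.

```python
def find_with_healing(page, locators):
    """Try each locator in order and report which one matched.

    page: dict mapping a locator string to an element (toy stand-in).
    locators: the default locator first, followed by fallbacks.
    Returns (element, locator_used, healed), where healed is True
    when the default locator failed and a fallback was used.
    """
    for index, locator in enumerate(locators):
        if locator in page:
            return page[locator], locator, index > 0
    raise LookupError(f"none of the locators matched: {locators}")


# After a redesign, the button's id is gone but its class and text remain.
page = {
    "css=button.checkout": "<button>",
    "xpath=//button[text()='Pay now']": "<button>",
}
locators = ["id=pay-btn", "css=button.checkout", "xpath=//button[text()='Pay now']"]

element, used, healed = find_with_healing(page, locators)
assert used == "css=button.checkout"
assert healed is True
```

Reporting which locator was used matters: a healed lookup keeps the run going, but the test object should still be updated so the default locator works again.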


FAQs

What is end-to-end (E2E) testing?

E2E testing verifies an application from start to finish as a complete workflow, simulating real user journeys (e.g., login → cart → checkout → payment → confirmation) and validating the full flow of data across UI, APIs, databases, networks, and third-party services.

What is integration testing?

Integration testing checks whether multiple modules/components work correctly when combined, focusing on the interfaces and data exchanged between them (e.g., UI ↔ GPS, booking ↔ driver management, payment processing in a ride-hailing app).

When should you run integration testing vs E2E testing in the SDLC?

Integration testing typically happens after unit testing and before E2E testing. E2E testing is usually performed later, often near the end of the lifecycle before release, to confirm real-world workflows.

What’s the main difference in scope?

- Integration testing: narrower, validates interactions between components/modules.

- E2E testing: broadest, covers the entire tech stack and complete user flows across systems.

What are common approaches to integration testing?

Two common strategies are:

- Big bang: integrate everything at once and test as a whole.

- Incremental: integrate and test in logical chunks (often top-down using stubs or bottom-up using drivers).

What are horizontal vs vertical E2E testing?

- Horizontal E2E: user-perspective testing of complete customer-facing flows (e.g., full checkout).

- Vertical E2E: more technical, layer-by-layer validation from top to bottom, often emphasizing backend layers like APIs/databases even without much UI.

Are E2E and integration testing interchangeable?

No, they’re complementary. Do integration testing first to catch module interaction issues early, then use E2E testing to confirm the system meets requirements in real-world scenarios. Both can be complex and are strong candidates for automation (especially to reduce human error and improve repeatability).